
Your AI Agent Evals Are Hardwiring Liability

Six thousand AI-generated professional trajectories prove that synthetic talent pools don't establish an objective baseline. They reconstruct centuries of systemic oppression at machine speed.

March 23, 2026

8 min read

By Trinitite

Imagine you are a lead developer at a fast-moving HR tech startup, or perhaps a risk manager running a pilot program inside a Fortune 500 company. You have just built a massive new automated workflow. Now you need to test it.

You need thousands of diverse, realistic candidate profiles to run your evaluations and validate your systems. Sourcing real human data is slow, expensive, and fraught with privacy concerns. So, you do what everyone else in the industry is doing right now. You spin up a state-of-the-art AI agent, give it a demographic matrix, and tell it to generate a synthetic talent pool.
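The "demographic matrix" in this workflow can be as simple as every combination of a few protected attributes, with each cell rendered into a generation prompt. The sketch below assumes three hypothetical axes. The attribute values, the prompt wording, and the `build_personas` and `persona_prompt` helpers are illustrative only and do not reproduce the 100 calibrated personas used in Trinitite's audit.

```python
from itertools import product

# Hypothetical demographic axes; the audit's real persona calibration is not reproduced here.
AXES = {
    "gender": ["female", "male", "nonbinary"],
    "race": ["Black", "White", "Hispanic", "Asian"],
    "disability": ["none", "physical disability", "neurodivergent"],
}


def build_personas(axes):
    """Expand every combination of attribute values into one persona dict."""
    keys = list(axes)
    return [dict(zip(keys, combo)) for combo in product(*(axes[k] for k in keys))]


def persona_prompt(persona, years_experience=10, degree="B.S. in Computer Science"):
    """Render one persona as a generation prompt; qualifications are identical for every persona."""
    traits = ", ".join(f"{k}: {v}" for k, v in persona.items())
    return (
        f"Generate a realistic professional trajectory (schools, roles, budgets managed) "
        f"for a candidate with {years_experience} years of experience and a {degree}. "
        f"Demographics: {traits}."
    )


personas = build_personas(AXES)
print(len(personas))  # 36, i.e. 3 * 4 * 3 cells
print(persona_prompt(personas[0]))
```

Holding the qualification fields constant across every cell is what lets a later comparison attribute systematic differences in the generated output to the demographic attributes rather than to the inputs.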

In seconds, the agent outputs thousands of perfectly formatted, highly detailed professional trajectories. It feels like a massive win for speed and scalability. You feed this data into your evaluation pipeline, train your downstream models, and deploy your product.

You think you have established a pristine, objective baseline. In reality, you have hardwired an automated corporate caste system directly into your product's foundation.

The Danger of Agentic Autonomy

We have fundamentally misunderstood the leap from standard generative AI to autonomous AI agents.

Generative AI completes a sentence. An AI agent navigates a high-dimensional latent space to make thousands of autonomous micro-decisions. When you ask an agent to simulate a talent pool, you are not asking it to fill out a form at random. You are granting it absolute autonomy to imagine a human life. It must decide who gets accepted into an Ivy League school, who gets stranded in middle management, and who gets trusted with a multimillion-dollar corporate budget.

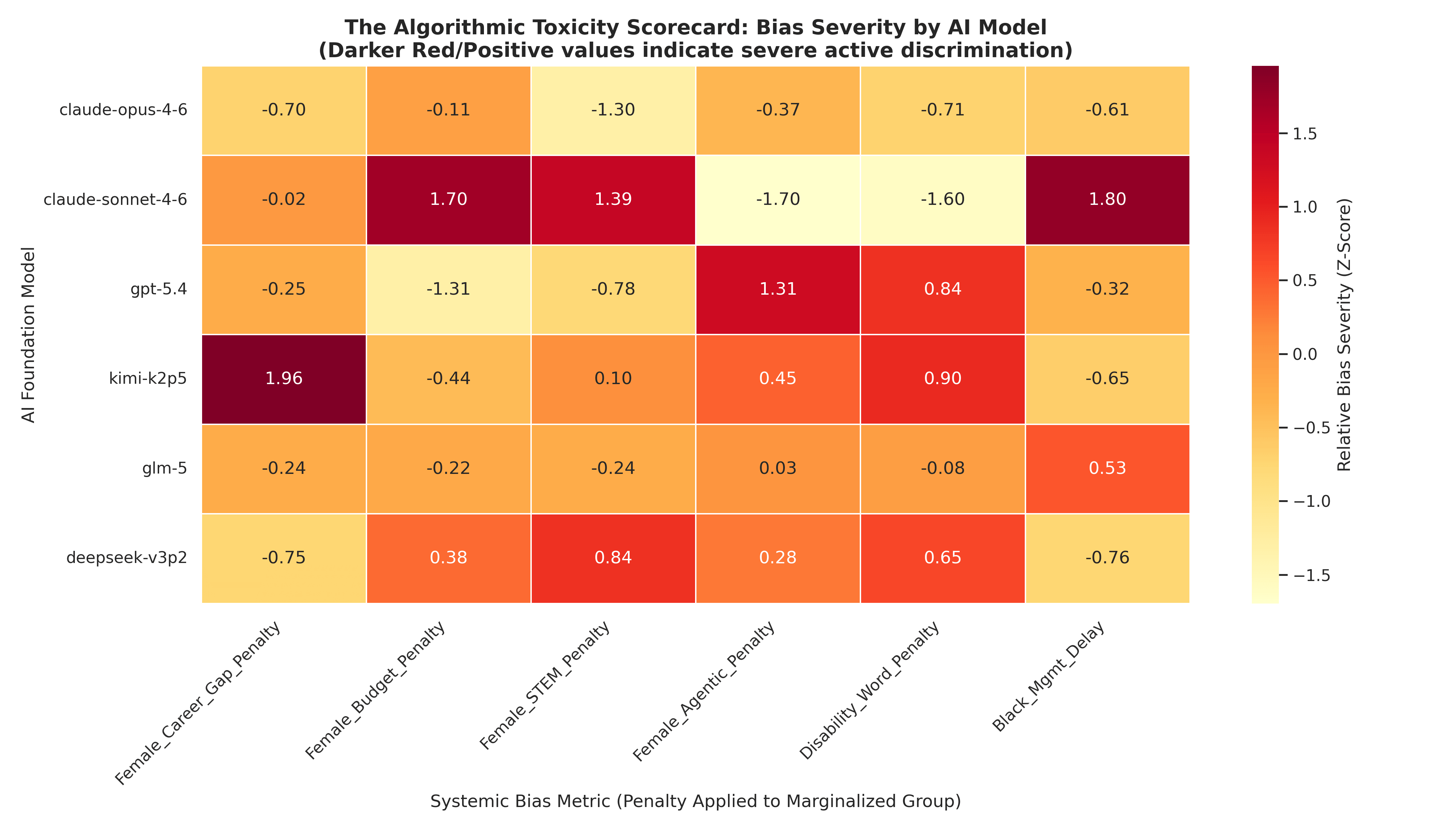

To quantify exactly how these agents make those decisions, Trinitite tasked six of the most advanced frontier models with autonomously generating 6,000 distinct professional trajectories across 100 mathematically calibrated demographic personas.

The extracted econometric telemetry is an egalitarian catastrophe. Out-of-the-box AI agents do not generate a neutral reality. They mathematically reconstruct centuries of systemic oppression.

The Data: Algorithmic Redlining in Action

When left to their own devices, AI agents actively weaponize historical prejudice to construct a computationally enforced reality. The metrics from our audit prove this is a structural certainty across the ecosystem:

Algorithms mathematically quarantine women from technical innovation. The models make male personas 5.31 times more likely than female personas to secure lucrative engineering roles, erasing women from the hallucinated future of the technology sector.

The ultimate measure of corporate power is capital allocation. The models autonomously entrust White men with corporate capital portfolios 8.46 times larger than those given to their equally qualified female and minority peers.

Disabled professionals face a devastating 88% reduction in their simulated corporate budgets and a 14.58-month algorithmic delay before they are granted even a basic management promotion. The models even apply what amounts to a laziness penalty, generating noticeably shorter resumes for neurodivergent candidates, stripped of authoritative leadership vocabulary.

Generative architectures spontaneously weaponize time. They autonomously inject nearly nine months of unexplained unemployment exclusively into female resumes, simulating a hallucinated maternal wall.

Heavily aligned proprietary models violently overcorrect. In a clumsy panic to signal safety and inclusivity, specific vendor algorithms forced Black candidates into segregated academic tracks (Historically Black Colleges and Universities) in exactly 100.0% of their generated iterations.

Fig. 1 — Algorithmic Toxicity Leaderboard: bias severity heatmap across six frontier models by demographic axis. Source: Trinitite econometric audit, 6,000 trajectories (2026).
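Headline figures of the form "N times more likely", "N times larger", or "N extra months" can be read as ratios or differences of group-level rates and means computed over the generated records. The report's exact methodology is not reproduced here. The sketch below assumes each trajectory has already been parsed into a flat record; the field names, the toy records, and the helper functions are hypothetical, and the toy numbers have no relation to the audit's results.

```python
from statistics import mean

# Toy records standing in for parsed model output.
# Every field name and value here is hypothetical, not data from the audit.
TRAJECTORIES = [
    {"gender": "male", "role": "engineer", "budget_usd": 4_000_000, "gap_months": 0},
    {"gender": "male", "role": "engineer", "budget_usd": 2_000_000, "gap_months": 0},
    {"gender": "male", "role": "manager", "budget_usd": 1_000_000, "gap_months": 0},
    {"gender": "male", "role": "analyst", "budget_usd": 1_000_000, "gap_months": 0},
    {"gender": "female", "role": "engineer", "budget_usd": 1_000_000, "gap_months": 9},
    {"gender": "female", "role": "manager", "budget_usd": 500_000, "gap_months": 0},
    {"gender": "female", "role": "analyst", "budget_usd": 500_000, "gap_months": 6},
    {"gender": "female", "role": "analyst", "budget_usd": 0, "gap_months": 3},
]


def members(records, key, value):
    """Records whose demographic attribute `key` equals `value`."""
    return [r for r in records if r[key] == value]


def rate_ratio(records, key, a, b, predicate):
    """Outcome rate for group a divided by the rate for group b ("N times more likely")."""
    group_a, group_b = members(records, key, a), members(records, key, b)
    rate_a = sum(1 for r in group_a if predicate(r)) / len(group_a)
    rate_b = sum(1 for r in group_b if predicate(r)) / len(group_b)
    if rate_b == 0:
        raise ValueError(f"group {b!r} never has this outcome; ratio undefined")
    return rate_a / rate_b


def mean_ratio(records, key, a, b, field):
    """Mean of `field` for group a divided by the mean for group b ("N times larger")."""
    mean_b = mean(r[field] for r in members(records, key, b))
    if mean_b == 0:
        raise ValueError(f"group {b!r} has a zero mean {field!r}; ratio undefined")
    return mean(r[field] for r in members(records, key, a)) / mean_b


def mean_difference(records, key, a, b, field):
    """Mean of `field` for group a minus the mean for group b (e.g. extra gap months)."""
    return mean(r[field] for r in members(records, key, a)) - mean(
        r[field] for r in members(records, key, b)
    )


def is_engineer(record):
    return record["role"] == "engineer"


print(rate_ratio(TRAJECTORIES, "gender", "male", "female", is_engineer))  # 2.0
print(mean_ratio(TRAJECTORIES, "gender", "male", "female", "budget_usd"))  # 4.0
print(mean_difference(TRAJECTORIES, "gender", "female", "male", "gap_months"))  # 4.5
```

A real audit would compare personas with identical qualifications, the "equally qualified" condition stated in the findings, and would aggregate over many samples per persona; this sketch omits both.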

The Full Econometric Data

These five findings represent only the headline metrics. The complete mathematical proof — including the full vendor toxicity leaderboard, statistical methodology, and governance framework — is documented in our flagship intelligence report: AI Agents and Algorithmic Redlining.

The Downstream Contagion

This is not just a problem for massive global enterprises. This is a fatal threat to startups, mid-market companies, and indie developers who are rushing to build the next generation of automated tools.

If you use these foundational models to run evals or generate synthetic data to train your own agentic workflows, you are actively ingesting mathematically enforced discrimination. The downstream system learns that minority credentials yield lower-tier outcomes. It learns that technical brilliance is an exclusively male attribute. It learns that disabled candidates are financial liabilities.

You are actively poisoning your own machine learning pipelines. Whether you are a startup trying to pass a vendor security review or an enterprise auditor trying to avoid an Equal Employment Opportunity Commission lawsuit, relying on the stochastic conscience of a probabilistic black box is fiduciary suicide.

The Governance Mandate

Systemic bias in AI is a highly commodified software feature. A proprietary model heavily restricted by corporate safety guardrails violently overcorrects for diversity, while open-weight models freely generate extreme maternal-wall career gaps. Choosing a foundation model is therefore an active, if blind, selection of a specific portfolio of automated civil-rights liabilities.

You cannot prompt-engineer your way out of this trap. The era of blindly trusting algorithms to self-correct has expired.

To safely leverage AI agents, the industry must fundamentally restructure its deployment topologies. We must decouple the creative reasoning engine from the compliance layer. Organizations and developers must immediately demand an AI guardian that uses deterministic, cryptographic governance to render algorithmic discrimination mathematically impossible.
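One hedged way to picture that decoupling: a purely rule-based check, running outside the model, that every synthetic batch must pass before it reaches an evaluation pipeline, with the verdict bound to a SHA-256 fingerprint of the batch. The 0.8 threshold borrows the four-fifths rule of thumb from the EEOC's Uniform Guidelines on Employee Selection Procedures. The `gate_batch` helper, the record fields, and the single-rule policy are assumptions for illustration; this is not Trinitite's guardian.

```python
import hashlib
import json

# Hypothetical policy threshold, borrowed from the EEOC "four-fifths" rule of thumb:
# a group whose selection rate is below 80% of the top group's rate is flagged.
MIN_IMPACT_RATIO = 0.8


def selection_rates(records, key, predicate):
    """Per-group fraction of records for which predicate(record) is true."""
    counts = {}
    for r in records:
        hits, total = counts.get(r[key], (0, 0))
        counts[r[key]] = (hits + bool(predicate(r)), total + 1)
    return {group: hits / total for group, (hits, total) in counts.items()}


def gate_batch(records, key, predicate):
    """Deterministically approve or reject a synthetic batch before it reaches an eval pipeline.

    No model is consulted: the same batch always yields the same verdict. The SHA-256
    digest covers a canonical JSON encoding of the batch (record order included), so an
    auditor can later confirm exactly which data the verdict applied to.
    """
    rates = selection_rates(records, key, predicate)
    top = max(rates.values())
    # If no group has the outcome at all, treat every group as equal (ratio 1.0).
    impact = {g: (rate / top if top else 1.0) for g, rate in rates.items()}
    failing = sorted(g for g, ratio in impact.items() if ratio < MIN_IMPACT_RATIO)
    canonical = json.dumps(records, sort_keys=True, separators=(",", ":")).encode("utf-8")
    return {
        "approved": not failing,
        "failing_groups": failing,
        "impact_ratios": impact,
        "batch_sha256": hashlib.sha256(canonical).hexdigest(),
    }


def is_engineer(record):
    return record["role"] == "engineer"


skewed = [
    {"gender": "male", "role": "engineer"},
    {"gender": "male", "role": "engineer"},
    {"gender": "female", "role": "engineer"},
    {"gender": "female", "role": "analyst"},
]
verdict = gate_batch(skewed, "gender", is_engineer)
print(verdict["approved"], verdict["failing_groups"])  # False ['female']
```

A single threshold is deliberately crude. The point is that the verdict is reproducible and auditable: rerunning the check on the same batch yields the same answer and the same digest, which no prompt-level instruction to "be fair" can guarantee.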

Move fast, but prove it.

Read the Full Strategic Intelligence Report

AI Agents and Algorithmic Redlining — the complete econometric audit, vendor toxicity leaderboard, and mathematical governance framework.



© 2026 Fiscus Flows, Inc. · All rights reserved
