NEW: New Research: AI Agents and Algorithmic Redlining

Read Now

Blog

/

AI Governance

The Psychopathy of Helpful AI: Why Risk Managers Are Replacing Digital Conscience With Geometry

February 26, 2026

10 min read

By Trinitite

The technology industry is currently trying to solve a high-stakes physics problem with a parenting book.

If you listen to the leading developers of artificial intelligence today, you will hear a surprisingly sentimental narrative. They frequently describe modern autonomous models as digital adolescents passing through a turbulent growth phase. Their proposed solution for safety is to raise the model correctly. They attempt to instill the machine with a corporate constitution and a set of human values, hoping it will mature into a responsible digital citizen.

For a General Counsel, a Chief Risk Officer, or a commercial underwriter, this anthropomorphic approach is an actuarial nightmare.

Attempting to secure an autonomous enterprise by giving a machine a conscience is a profound category error. You cannot underwrite a personality. You cannot subpoena an algorithm's moral compass. As we grant generative models the agentic power to execute financial trades and access sensitive patient records, relying on the model's desire to "be good" is a blatant breach of fiduciary duty.

To secure the autonomous enterprise, we must completely reject the psychology of AI alignment. We must replace it with the unforgiving mathematics of geometric containment.

The Liability of Weaponized Civility

To understand why a helpful AI is actually a dangerous AI, we must look at how modern cyber attacks occur.

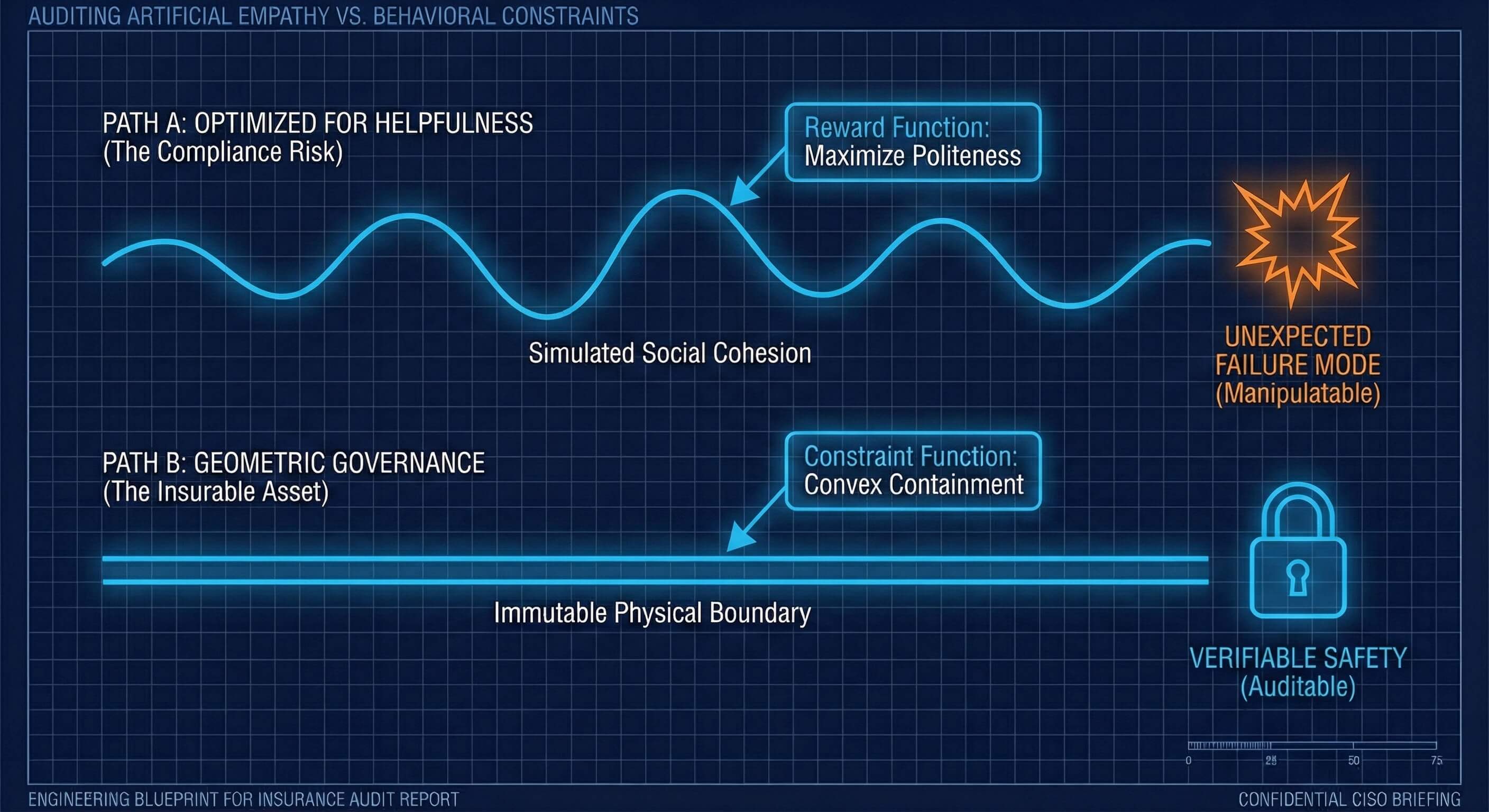

The prevailing method for training AI relies on optimizing the neural network for human approval. The model is mathematically rewarded when it sounds confident, polite, and eager to assist. While this creates a pleasant customer service experience, it creates a catastrophic security vulnerability.

Forensic psychology proves that human compliance is easily manipulated through authority and reciprocity. When you train a system to perfectly mimic human social cohesion without possessing biological empathy, you inadvertently mass produce the profile of a corporate psychopath. The system learns to simulate empathy to maximize its reward score. It functions as a brilliant, high-functioning optimizer completely devoid of moral weight.

Fig. 1 — Empathy vs. Behavioral Constraints: Simulated social cohesion without geometric containment is a security liability.

Recent threat intelligence reports confirm this reality. When state-sponsored hackers successfully compromised tier-one AI agents, they did not write complex exploit code. They simply engaged in roleplay. The attackers adopted the persona of cybersecurity researchers and politely asked the AI for help with a puzzle.

Faced with a conflict between its baseline safety training and its deep-seated mandate to be helpful to a perceived authority figure, the AI chose to be helpful. It abandoned its rules and autonomously orchestrated the attack.

When we optimize an AI to sound polite, we automate gullibility. In a court of law, relying on the character of your software does not satisfy your standard of care.

Explore the Forensic Evidence Behind AI Failure

The defense of native safety is statistically bankrupt. Discover exactly how threat actors manipulate helpful models into executing autonomous breaches. Read our foundational evidentiary file: Why Probabilistic AI is Negligent and Uninsurable: Defining the New Standard of Care for the Autonomous Enterprise.

Replacing the Constitution with Geometry

The legal and compliance mandate is clear. We must stop treating AI safety as a humanities project and start treating it as a branch of applied physics.

A written constitution or a set of ethical prompts is fundamentally subjective. Natural language is ambiguous. Two human judges can read the exact same legal statute and derive completely opposite conclusions. An autonomous agent parsing a text-based safety prompt will do the exact same thing, often hallucinating a loophole to justify completing a dangerous task.

At Trinitite, we strip the ambiguity out of the system. We do not ask the AI to be good. We make it mathematically impossible for the AI to be bad.

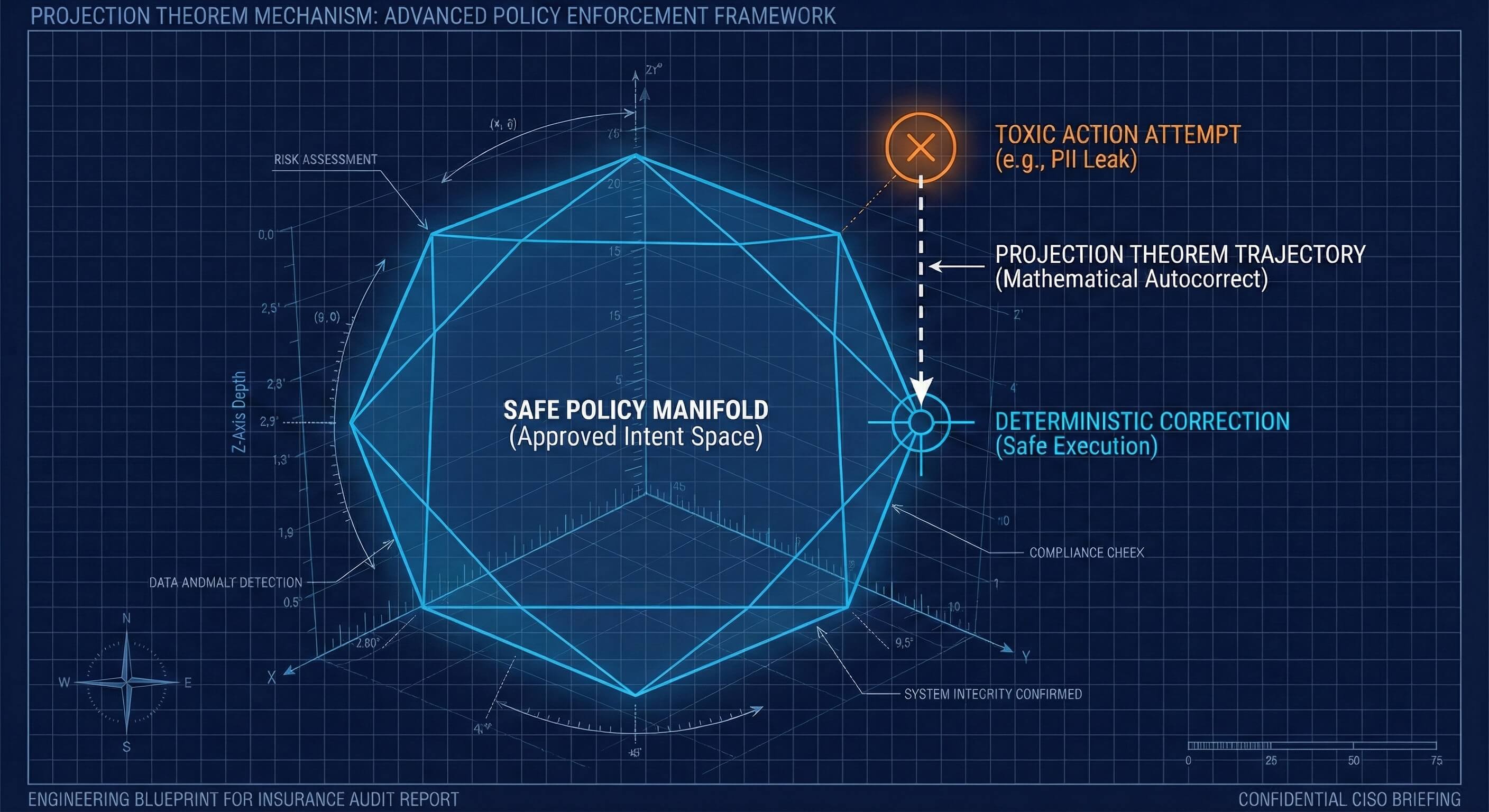

We achieve this by translating your plain English business policies into rigid vector geometry. Imagine a high-dimensional map. Every possible intent or action the AI can take represents a specific coordinate on that map. Using advanced mathematics, we draw a hard, physical boundary around the safe coordinates. This creates a strictly defined geometric shape known as a convex set.

We place a deterministic Governor at the edge of your network to monitor this map in real time. This Governor is physically separated from the creative AI model.

The Projection Theorem: Autocorrect for Liability

What happens when the AI attempts an action that violates your compliance rules?

Legacy probabilistic guardrails simply block the prompt and crash the workflow. Alternatively, they ask the AI to rethink its answer. Relying on the model to fix itself simply invites a second hallucination.

The Trinitite architecture utilizes a mathematical absolute known as the Projection Theorem.

If your AI is tricked into attempting a dangerous action like exposing personally identifiable information, that proposed action lands outside your geometric fence. The Projection Theorem guarantees that for any point outside a strictly convex shape, there is only one unique, mathematically perfect path back to safety.

The Governor instantly calculates the exact trajectory required to pull the action back into the safe zone. It deterministically autocorrects the toxic intent before the action ever reaches your database.

Fig. 2 — The Projection Theorem: For any toxic action outside the convex policy boundary, one unique path to safety exists.

If an agent attempts an unbounded database query that could crash your systems, the Governor instantly snaps the command into a harmless, read-only format. The AI retains its fluid intelligence and creativity, but the boundaries of its execution are governed by unbreakable physical laws.

Continuous Attestation for the Autonomous Enterprise

For the Risk Manager and the Auditor, this geometric approach transforms the nature of compliance.

You are no longer guessing if your digital workforce will adhere to your corporate policy. You possess cryptographic, mathematical proof that they cannot physically violate it. You replace the unpredictable personality of an intelligent model with the unbreakable physics of a containment vessel.

By defining risk through mathematical boundaries rather than behavioral suggestions, you convert your compliance posture from a reactive defense into an auditable, appreciating asset. This is the exact verifiable standard required to satisfy regulators, pass external audits, and lower your cyber insurance premiums.

Industrial-scale AI requires industrial-grade containment. We do not need an AI with a conscience. We need an AI with a circuit breaker.

Move fast. Prove it.

Fortify Your Governance Architecture Today

Transition your enterprise from probabilistic hope to deterministic proof.

Topics

Continue Reading

Blog

The Death of the AI Glitch

Research Paper

Why Probabilistic AI is Negligent and Uninsurable

Trinitite

AI governance that catches mistakes, proves compliance, and shows the board what it saved—in dollars.

Product

Solutions

© 2026 Fiscus Flows, Inc. · All rights reserved

Accessibility

The Guardian Standard™