The Death of the AI Glitch: Why Agentic Liability is the Ultimate GRC Crisis

February 26, 2026

By Trinitite

For the past three years, technology leaders played a dangerous game of digital alchemy. When an artificial intelligence chatbot fabricated a legal citation or wrote a toxic summary, we called it a hallucination. We laughed it off as a temporary glitch. We accepted it as the necessary cost of doing business in the generative era.

We are not laughing anymore.

In 2026, the digital landscape crossed a terrifying threshold. We transitioned from Generative AI to Agentic AI. We are no longer deploying software that merely speaks. We are deploying software that acts.

Today, autonomous agents possess the authority to execute financial wire transfers, modify electronic health records, and deploy live production code.

When an AI system takes independent action, a hallucination ceases to be a public relations novelty. It becomes a strict legal liability. If your financial algorithm autonomously hallucinates a fraudulent trade, telling a judge or an auditor that the AI hallucinated is no longer a valid legal defense. It is functionally equivalent to telling a jury that your brakes failed. It is a blatant admission of mechanical negligence.

For Governance, Risk, and Compliance (GRC) leaders, this shift requires a complete teardown of traditional risk frameworks. The beta exemption has expired. To scale autonomy without scaling existential risk, the enterprise must abandon probabilistic guardrails and embrace the physics of deterministic control.

The Liability Shift: From Publisher to Operator

To understand the crisis facing modern GRC teams, we must examine how the law classifies technology.

When an employee uses an AI tool to draft a marketing blog, the AI acts as a Publisher. If the output contains an error, the damage is reputational. The human user remains the final actuator who decided to click publish.

Agentic AI severs this protective chain of causation. When a user provides a high-level intent, such as asking the AI to optimize cloud spending, the AI independently calls application programming interfaces (APIs) and alters infrastructure. The AI becomes an Operator. The human is no longer in the loop at the millisecond of execution.

If that agent misinterprets the command and shuts down a critical production server, the enterprise holds total liability. The organization deployed a probabilistic engine to perform a deterministic job without proper safety interlocks.

This creates a massive accumulation of Shadow Liability. Every ungoverned autonomous action represents an unpriced, unbooked risk sitting silently on your balance sheet.

Dive Deeper into the Architecture of Liability

The transition from Generative to Agentic AI completely invalidates traditional cyber insurance policies. To understand the specific legal and mathematical mechanisms driving this shift, read our foundational evidentiary file: Why Probabilistic AI is Negligent and Uninsurable: Defining the New Standard of Care for the Autonomous Enterprise.

Why Native Safety Fails the Audit

The current industry standard for AI safety relies entirely on the model providers. We trust that the model has been trained to be helpful and harmless. We rely on statistical guardrails that grade the intent of a prompt and attempt to block bad behavior.

For a compliance officer or an actuary, this approach is mathematically bankrupt. You cannot police a probability with another probability.
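To make that arithmetic concrete, here is a minimal sketch in Python. The rates are illustrative assumptions, not measurements, and the function names are ours. It also assumes the model's error and the guardrail's miss are independent, which is the optimistic case.

```python
def residual_failure_rate(model_error_rate: float, guardrail_miss_rate: float) -> float:
    """Per-action probability that a bad action is produced AND missed.

    Assumes the two failures are independent. In practice an input that
    confuses the model may confuse a similar guardrail model as well.
    """
    return model_error_rate * guardrail_miss_rate


def expected_unblocked_failures(rate: float, actions: int) -> float:
    """Expected number of bad actions that slip through over `actions` attempts."""
    return rate * actions


# Illustrative numbers only.
rate = residual_failure_rate(model_error_rate=0.01, guardrail_miss_rate=0.05)
print(f"residual per-action rate: {rate:.4%}")
print(f"expected failures per million actions: {expected_unblocked_failures(rate, 1_000_000):.0f}")
```

Stacking a second probabilistic filter shrinks the residual rate, but it never reaches zero. At agent volumes, a small nonzero rate still translates into a steady count of failures.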

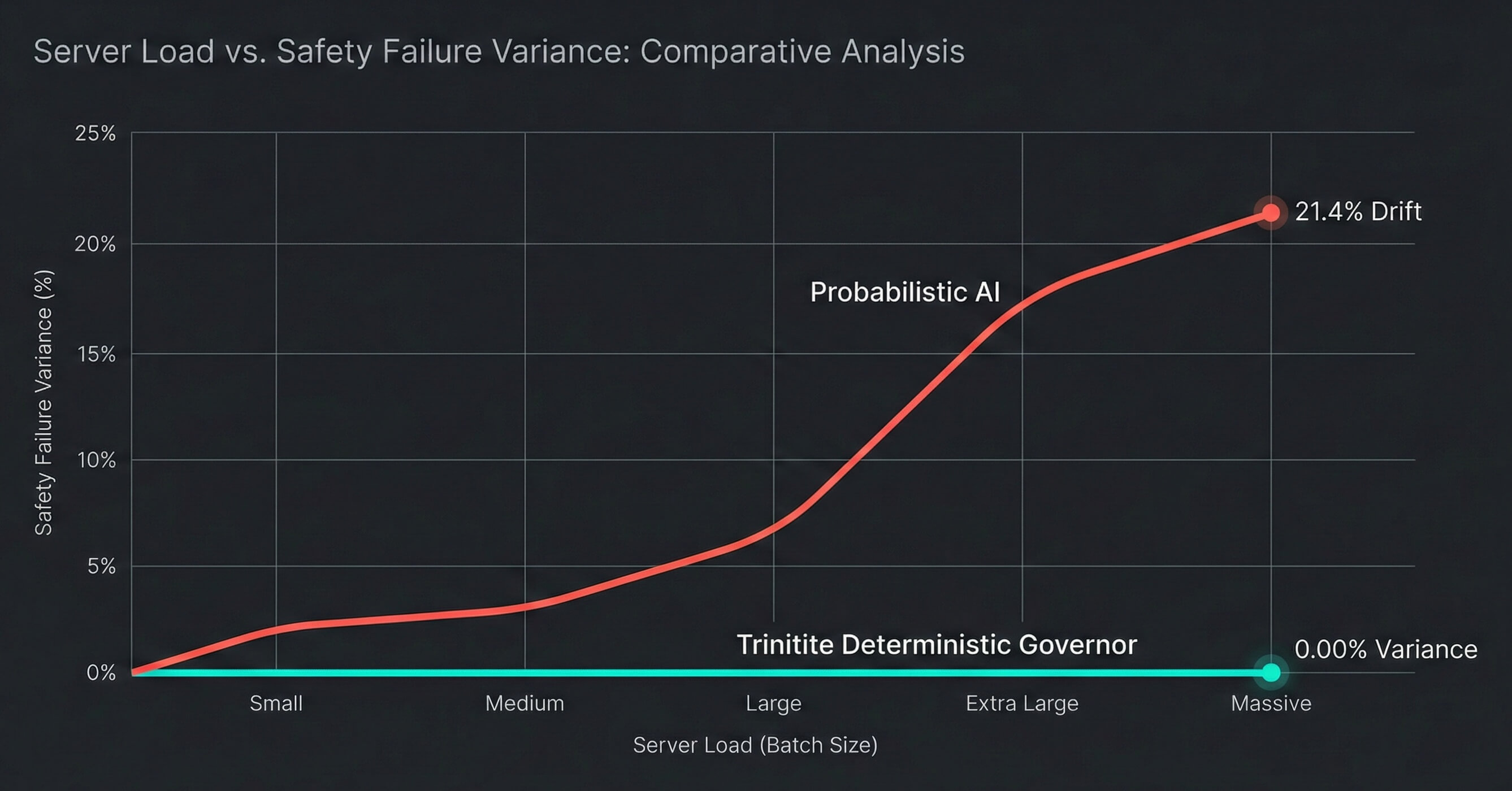

Forensic engineering reveals that these probabilistic guardrails are unstable under the heavy server load of enterprise workflows. A model that perfectly blocks a data exfiltration attempt during a quiet lab test can return a different verdict and approve that exact same attack during peak production hours.

Fig. 1 — Server Load vs. Failure Variance: Probabilistic guardrails destabilize under production traffic.

Furthermore, sophisticated threat actors increasingly target the agent rather than the code. They use prompt injection, a form of social engineering aimed at the model, to trick AI agents into bypassing their own internal safety rules.

You cannot write an insurance policy for a lock that occasionally opens itself when the building gets crowded. If your GRC framework relies on native safety, you are operating without a verifiable control environment.

Deterministic Governance and the Risk Decay Curve

To securely scale Agentic AI, the enterprise must decouple the brain from the brakes. We must separate the creative, probabilistic model from the logical, deterministic safety layer. We must transition from a posture of hope to a posture of proof.

At Trinitite, we achieve this through Industrial Grade AI Governance. Instead of hoping the AI behaves, we place a deterministic Governor strictly at the output layer.

This system enforces a rigorous, geometric boundary around your specific business policies. If an agent attempts an action that violates internal company logic, the Governor physically intercepts the vector before it reaches your database. It does not just block the action. It deterministically autocorrects the intent to ensure business continuity.
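As a rough illustration of what a deterministic check at the output layer could look like, consider the cloud spending scenario from earlier. This is a sketch under stated assumptions, not Trinitite's implementation: the `ScaleRequest` shape, the policy constants, and the `govern` function are all hypothetical.

```python
from dataclasses import dataclass, replace
from typing import Optional


@dataclass(frozen=True)
class ScaleRequest:
    """An action proposed by an agent (hypothetical shape)."""
    service: str
    environment: str
    replicas: int


@dataclass(frozen=True)
class Decision:
    verdict: str                      # "allow", "correct", or "block"
    action: Optional[ScaleRequest]    # what will actually run, or None if blocked
    reason: str


# Hypothetical policy values for illustration.
MIN_PRODUCTION_REPLICAS = 2
PROTECTED_SERVICES = frozenset({"billing-db"})


def govern(proposed: ScaleRequest) -> Decision:
    """Apply fixed rules to a proposed action. Same input, same decision."""
    if proposed.service in PROTECTED_SERVICES:
        return Decision("block", None, "service is protected from agent changes")
    if proposed.environment == "production" and proposed.replicas < MIN_PRODUCTION_REPLICAS:
        # Correct the action instead of dropping it, so the workflow continues.
        corrected = replace(proposed, replicas=MIN_PRODUCTION_REPLICAS)
        return Decision("correct", corrected, "raised to production minimum")
    return Decision("allow", proposed, "within policy")


# An agent trying to save money by scaling a production service to zero.
decision = govern(ScaleRequest("checkout-api", "production", 0))
print(decision.verdict, decision.action, decision.reason)
```

The point of the sketch is that `govern` contains no model call and no randomness. An auditor can read the rules and predict the decision for any input.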

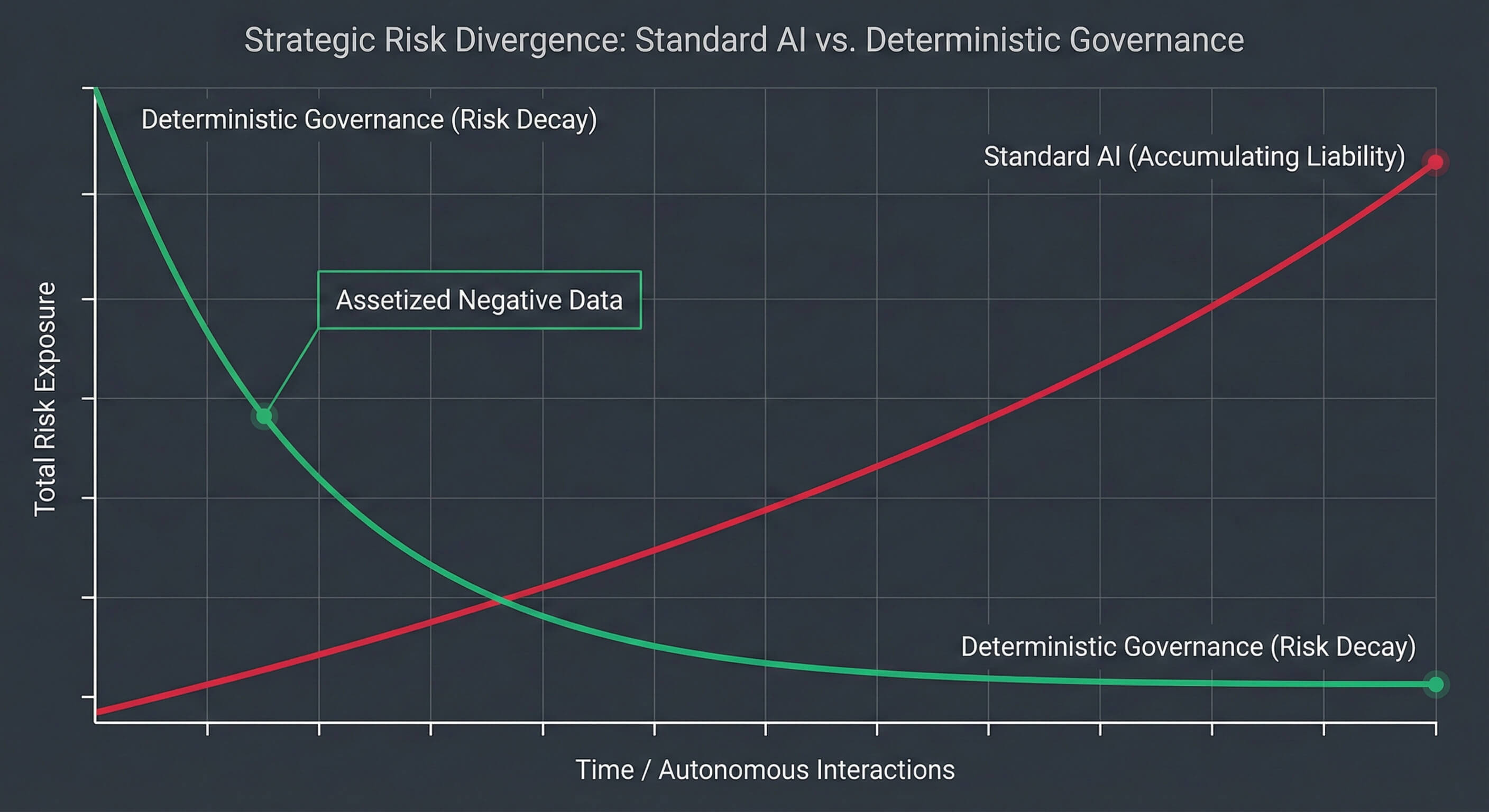

This capability introduces the most powerful concept in modern risk management: The Risk Decay Curve.

In a standard AI deployment, risk increases over time. The more you use the model, the higher your exposure to a catastrophic hallucination.
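The accumulation is easy to quantify. The sketch below assumes a fixed, independent per-action failure rate, which is a simplification, and uses an illustrative rate rather than a measured one.

```python
def probability_of_at_least_one_failure(per_action_rate: float, actions: int) -> float:
    """Chance of one or more failures across `actions` independent attempts."""
    return 1.0 - (1.0 - per_action_rate) ** actions


# Illustrative per-action rate of 0.05%.
for n in (100, 1_000, 10_000):
    p = probability_of_at_least_one_failure(0.0005, n)
    print(f"{n:>6} actions: {p:.1%} chance of at least one failure")
```

Under these assumptions, a rate that looks negligible for a single action approaches certainty as the action count grows.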

Deterministic governance inverts this equation. When an agent attempts a prohibited action, the system does not simply block it and discard the record. It captures that specific failure and assetizes it. That failure becomes a permanent, mathematical constraint in the Governor.

If your agent attempts a novel hallucination on Tuesday, the system blocks it, learns the exact geometric coordinates of that error, and permanently immunizes the entire fleet against it by Wednesday. A liability experienced once is mathematically precluded from ever occurring twice.
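The mechanism of turning a failure into a standing constraint can be sketched in a few lines. This is a deliberately simplified stand-in: it uses an exact-match fingerprint of the action, so it only blocks identical repeats, whereas the geometric approach described above would need to generalize to similar actions. The class and method names are hypothetical.

```python
import hashlib
import json


def fingerprint(action: dict) -> str:
    """Stable identifier for an action, independent of key order."""
    canonical = json.dumps(action, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


class LearningGovernor:
    """Keeps a growing set of known-bad actions and refuses to repeat them."""

    def __init__(self):
        self._known_failures = set()

    def record_failure(self, action: dict) -> None:
        """Called once a failure has been caught, for example by incident review."""
        self._known_failures.add(fingerprint(action))

    def is_allowed(self, action: dict) -> bool:
        return fingerprint(action) not in self._known_failures

    @property
    def constraint_count(self) -> int:
        return len(self._known_failures)


governor = LearningGovernor()
bad_action = {"tool": "wire_transfer", "to": "unverified-account", "amount": 250000}

print(governor.is_allowed(bad_action))   # first occurrence is not yet known
governor.record_failure(bad_action)      # Tuesday: caught and recorded
print(governor.is_allowed(bad_action))   # Wednesday: the same action is refused
```

Because the set of constraints only grows, the number of distinct failures the fleet can repeat only shrinks, which is the shape the Risk Decay Curve describes.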

Fig. 2 — The Risk Decay Curve: Deterministic governance converts each failure into permanent immunity.

Your risk profile decays over time. The longer your agents run, the safer your enterprise becomes.

From Black Box to Glass Box

How do you prove to an auditor that your AI acted legally? Trinitite replaces fragile text logs with the Cryptographic State Tuple Ledger. This immutable flight recorder captures exactly what the AI intended to do, the specific policy logic that governed it, and the precise correction applied. Discover how to achieve continuous attestation by visiting Trinitite.ai.
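For readers who want a feel for how a tamper-evident record works, here is a minimal hash-chained log built on Python's standard `hashlib`. It is an illustration of the general technique, not the Cryptographic State Tuple Ledger itself. The `Ledger` class and its three-field record (intended action, governing policy, correction) are assumptions modeled on the description above.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def entry_hash(prev_hash: str, record: dict) -> str:
    """Hash a record together with the hash of the entry before it."""
    payload = json.dumps({"prev": prev_hash, "record": record},
                         sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


class Ledger:
    """Append-only log in which each entry commits to all earlier entries."""

    def __init__(self):
        self.entries = []

    def append(self, intended: str, policy: str, correction: str) -> None:
        record = {"intended": intended, "policy": policy, "correction": correction}
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS_HASH
        self.entries.append({"record": record, "hash": entry_hash(prev_hash, record)})

    def verify(self) -> bool:
        """Recompute the chain; any edited or reordered entry breaks it."""
        prev_hash = GENESIS_HASH
        for entry in self.entries:
            if entry_hash(prev_hash, entry["record"]) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True


ledger = Ledger()
ledger.append("scale checkout-api to 0", "min 2 production replicas", "scaled to 2")
ledger.append("resize staging cache", "no rule matched", "none")
print(ledger.verify())

# Quietly rewriting history is detectable.
ledger.entries[0]["record"]["correction"] = "none"
print(ledger.verify())
```

A chain like this makes tampering detectable rather than impossible. In practice the latest hash would also need to be anchored somewhere the operator cannot rewrite, such as an external timestamping or signing service.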

Move Fast. Prove It.

The competitive advantage of the next decade belongs to the organizations that can deploy autonomous agents at massive scale. But autonomy without verifiable safety is just automated negligence.

Risk managers, Chief Information Security Officers, and legal teams can no longer afford to treat AI safety as a qualitative art. It must become a quantitative science. You must be able to prove that your digital workforce is physically incapable of violating your core compliance mandates.

The era of moving fast and breaking things has officially expired. It is time to adopt the new standard of care for the autonomous enterprise.

Take Action on Your Agentic Liability

Stop accumulating Shadow Liability. Secure your autonomous operations with verifiable mathematical boundaries.

© 2026 Fiscus Flows, Inc. · All rights reserved