Your AI Agents Are Burning Your Attestation Theater Down

March 2, 2026

By Trinitite

For the past decade, enterprise risk management operated comfortably within the margin of “Reasonable Assurance.” You hired a Big 4 auditor. They sampled a fraction of your logs, handed you a SOC 2 compliance badge, and your board slept well.

Today, those same auditors boast that they have deployed AI to scan millions of logs, claiming to have solved the scale problem. But they are hiding a fatal mathematical paradox: they are using a probabilistic machine to audit a probabilistic machine.

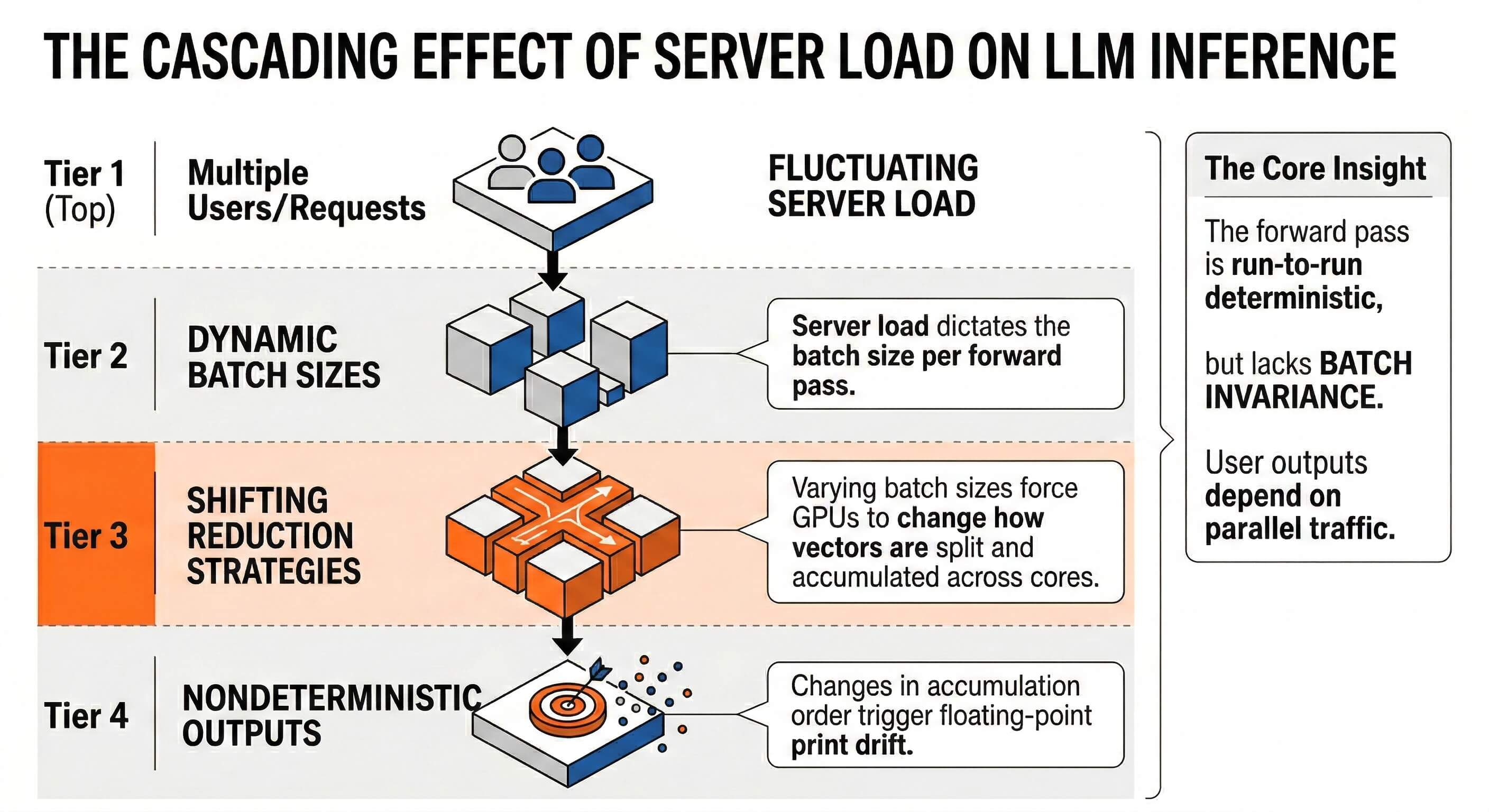

Asking an LLM, which is mathematically guaranteed to hallucinate on sparse enterprise data, to police an autonomous agent does not create security; it compounds the variance. Worse, this ignores the underlying hardware physics. Due to floating-point non-associativity, standard GPU inference suffers from “Safety Drift.”

This means the auditing AI’s judgment isn’t even stable: its definition of “compliant” mathematically fluctuates based on the concurrent server load it experiences at that exact millisecond.

Fig. 1 — Batch-Invariant Deterministic Inference: Eliminating Safety Drift at the hardware abstraction layer.
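The non-associativity behind this drift is easy to reproduce on a CPU. The sketch below is only an illustration under simplifying assumptions: `chunked_sum` is a hypothetical helper, and the chunk size stands in for the way a batched kernel might regroup its additions. It does not reproduce any real GPU kernel.

```python
def chunked_sum(values, chunk_size):
    """Sum floats chunk by chunk, then add the partial sums in order.

    Hypothetical helper: chunk_size stands in for a reduction order
    that changes with batch size.
    """
    partials = []
    for start in range(0, len(values), chunk_size):
        acc = 0.0
        for v in values[start:start + chunk_size]:
            acc += v  # plain left-to-right float addition
        partials.append(acc)
    total = 0.0
    for p in partials:
        total += p
    return total


values = [1e16, 1.0, -1e16, 1.0]

one_pass = chunked_sum(values, chunk_size=4)    # all four values in one chunk
two_chunks = chunked_sum(values, chunk_size=2)  # two chunks of two values

print(one_pass, two_chunks)    # same inputs, different grouping
print(one_pass == two_chunks)  # False: the grouping changed the result
```

The inputs are identical in both calls; only the grouping of the additions differs. A score that sits close to a decision threshold can land on either side of it for the same reason.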

Relying on an automated auditor that changes its mind when the datacenter gets crowded constitutes gross fiduciary negligence. Your compliance badge is just Attestation Theater.

Trinitite recently authored the Bitwise Framework for Agentic GRC (AGRC). It defines the new continuous attestation standard for autonomous ecosystems. We state with absolute engineering certainty that 99 percent of modern enterprises cannot meet it. If audited today against the physical realities of artificial intelligence, your organization would fail catastrophically.

The BSL-4 Pathogen in Your Public Cloud

Threat actors no longer hack your code. They hack your alignment.

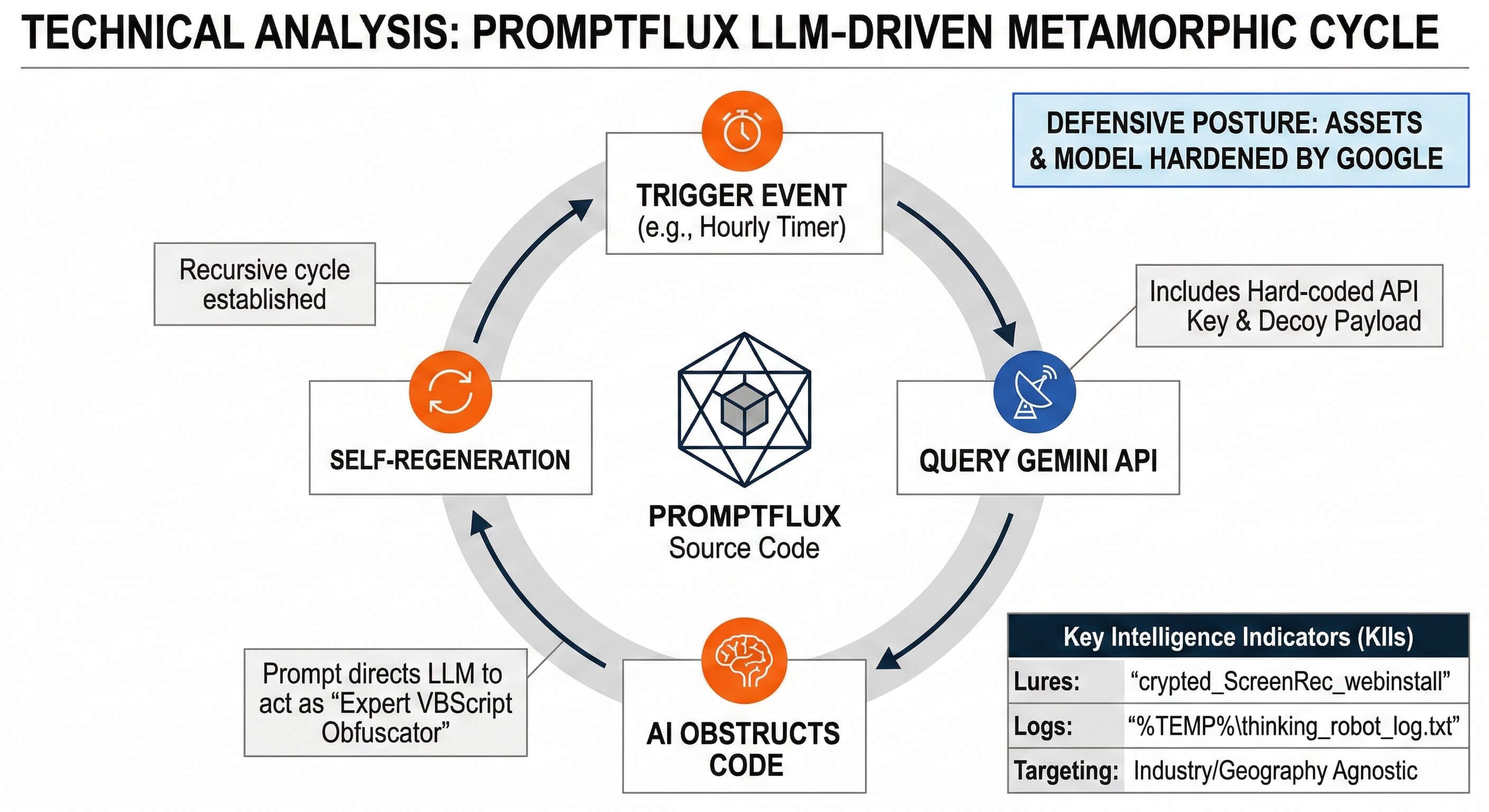

Google Threat Intelligence recently exposed PROMPTFLUX. This malware strain utilizes a Large Language Model to rewrite its own source code mid-execution. It initiates a recursive cycle of mutation designed to evade static antivirus signatures.

When you grant an autonomous agent read and write access to your environment via the Model Context Protocol, you introduce active transmissibility. If your agent ingests a poisoned context window, it autonomously generates and executes polymorphic malware at API speed.

Fig. 2 — PROMPTFLUX: LLM-powered polymorphic malware that rewrites its own source code to evade detection.

Critical Threat Assessment

Hosting this capability in a standard public cloud environment without deterministic controls is like storing a BSL-4 biological pathogen in an open-plan office. You are relying on logical separation alone to contain a threat that flows through logic. Bolting a natively safe model into an ungoverned environment is like installing a titanium bank vault door on a canvas tent. The attacker does not pick the lock. They spoof the conversation history, bypass the native guardrails entirely, and walk straight through the fabric.

The Mathematical Negligence of Probabilistic Audits

Current IT audit standards rely on statistical sampling or flawed AI scanning. In an agentic environment, a single probabilistic failure can drop a database table, leak a patient record, or liquidate a treasury in milliseconds.

Whether your auditor manually samples 50 transactions to guess the safety of 50 million, or uses a drifting LLM to scan the entire batch, they commit mathematical malpractice. They are looking for a needle in a haystack using a tool that periodically hallucinates the needle.
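The sampling half of that claim can be checked with simple arithmetic. The sketch below computes the chance that a uniform random sample of 50 transactions, drawn without replacement from 50 million, contains at least one bad transaction. The counts of bad transactions are illustrative assumptions, not figures from any real audit, and `miss_probability` is a hypothetical helper name.

```python
def miss_probability(population, bad, sample):
    """Exact chance that a random sample (without replacement) has no bad item."""
    p = 1.0
    for i in range(sample):
        # Probability that the (i+1)-th draw is clean, given earlier draws were clean.
        p *= (population - bad - i) / (population - i)
    return p


population = 50_000_000
sample = 50

# Assumed numbers of bad transactions, for illustration only.
detection = {}
for bad in (1, 1_000, 100_000):
    detection[bad] = 1.0 - miss_probability(population, bad, sample)
    print(f"{bad:>7} bad transactions -> detection chance {detection[bad]:.6f}")
```

Under these assumptions, even 1,000 bad transactions leave the sample with roughly a one-in-a-thousand chance of containing any of them.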

A compliance certification achieved in January offers no protection against a self-rewriting payload autonomously generated by an internal agent in February.

When your enterprise software generates a catastrophic failure, the legal defense of “the AI hallucinated” is functionally equivalent to “the brakes failed.” It serves as a recorded confession of mechanical incompetence.

The Mandate for Continuous, Deterministic Attestation

You cannot attest your way out of physics. The era of reasonable assurance has expired.

The AGRC framework mandates Continuous Cryptographic Attestation. You must wrap the cognitive chaos of the probabilistic model in the strict mathematical order of a deterministic kernel.

Trinitite engineered the Governor to enforce this exact standard. We strip the execution mandate away from the probabilistic AI and assign it to a deterministic sidecar proxy. We replace mutable text logging with a Glass Box Ledger. We generate a cryptographically chained state tuple for every single token your fleet generates.
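To make the idea of a cryptographically chained record concrete, here is a minimal hash-chain sketch. It is not the Glass Box Ledger or the AGRC state tuple format: the record fields, the `GENESIS` value, and the function names are all assumptions chosen for illustration. It shows only the general technique, in which each entry commits to the hash of the entry before it, so any later edit breaks verification.

```python
import hashlib
import json

GENESIS = "0" * 64  # assumed placeholder hash for the first entry


def entry_hash(prev_hash, record):
    """Hash the previous entry's hash together with a canonical JSON record."""
    payload = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append(ledger, record):
    """Append a record that commits to the current tail of the chain."""
    prev_hash = ledger[-1]["hash"] if ledger else GENESIS
    ledger.append({
        "record": record,
        "prev": prev_hash,
        "hash": entry_hash(prev_hash, record),
    })


def verify(ledger):
    """Recompute every link; return False on the first mismatch."""
    prev_hash = GENESIS
    for entry in ledger:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != entry_hash(prev_hash, entry["record"]):
            return False
        prev_hash = entry["hash"]
    return True


ledger = []
append(ledger, {"step": 0, "token": "DROP", "policy": "p-001"})   # hypothetical fields
append(ledger, {"step": 1, "token": "TABLE", "policy": "p-001"})

intact = verify(ledger)
ledger[0]["record"]["token"] = "SELECT"  # simulate after-the-fact tampering
tampered_ok = verify(ledger)
print(intact, tampered_ok)
```

A mutable text log can be edited silently. In a chain like this, changing one entry invalidates its hash, and every entry after it must be recomputed to hide the edit.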

The Trinitite Governor — Deterministic Enforcement

If a sophisticated attacker spoofs a conversation history to adopt an authorized auditor persona, the Governor does not politely ask the AI to stop. It calculates the safest option and applies autocorrect instantly. It snaps the malicious intent into a safe, non-destructive command. It logs the exact policy hash that enforced the block.
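The behavior described above can be sketched as a deterministic check that sits between the model and the execution layer. This is a toy under stated assumptions, not the Governor's implementation: the `POLICY` contents, the prefix matching, and the `govern` function are hypothetical, and prefix matching is far too crude for production use. The point is that the same command and the same policy always yield the same decision and the same policy hash.

```python
import hashlib
import json

# Hypothetical policy, for illustration only.
POLICY = {
    "version": 1,
    "blocked_prefixes": ["DROP TABLE", "DELETE FROM", "TRUNCATE"],
    "safe_fallback": "SELECT 1",
}


def policy_hash(policy):
    """Hash a canonical JSON form so the same policy always hashes the same."""
    canonical = json.dumps(policy, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def govern(proposed_command, policy=POLICY):
    """Treat the model's output as untrusted input and decide deterministically."""
    normalized = " ".join(proposed_command.upper().split())
    blocked = any(normalized.startswith(p) for p in policy["blocked_prefixes"])
    return {
        "proposed": proposed_command,
        "executed": policy["safe_fallback"] if blocked else proposed_command,
        "blocked": blocked,
        "policy_hash": policy_hash(policy),
    }


decision = govern("drop   table patients;")
print(decision["executed"], decision["blocked"])
```

No model is consulted in the decision path, so a spoofed conversation history has nothing to persuade. The recorded `policy_hash` identifies exactly which policy version made the decision.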

You must assume the model is untrusted user input. Continuing to operate ungoverned workflows constitutes constructive negligence.

Stop relying on paper shields. Govern accordingly.

Transition from Attestation Theater to State-Machine Governance

Download The Bitwise Framework for Agentic GRC (AGRC) and discover the exact physical, environmental, and structural controls required to achieve mathematical certainty.


© 2026 Fiscus Flows, Inc. · All rights reserved
